ABSTRACT

Automatic speech recognition (ASR) is a well-researched field of study aimed at augmenting the man-machine interface through interpretation of the spoken word. From in-car voice recognition systems to automated telephone directories, automatic speech recognition technology is becoming increasingly common. Nonetheless, the performance of traditional audio-only ASR systems degrades in noisy environments such as moving vehicles.

To improve system performance under these conditions, visual speech information can be incorporated into the ASR system, yielding what is known as audio-video speech recognition (AVASR). The majority of AVASR research focuses on lip parameter extraction within controlled environments, but these scenarios fail to meet the demanding requirements of most real-world applications. In a visually unconstrained environment, AVASR systems must contend with constantly changing lighting conditions and background clutter, as well as subject movement in three dimensions.

This work proposes a robust still-image lip localization algorithm capable of operating in an unconstrained visual environment, serving as a visual front end to AVASR systems. A novel Bhattacharyya-based face detection algorithm compares candidate regions of interest with a unique illumination-dependent approximation of the face model probability distribution function. Following face detection, a lip-specific, Gabor filter-based feature space is used to extract facial features and localize the lips within the frame. Results indicate an overall lip localization success rate of 75% despite the demands of the visually noisy environment.
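To make the Bhattacharyya comparison concrete, here is a minimal sketch. It assumes that both the face model PDF and each candidate region are represented as discrete histograms over the same bins (for example, quantized color values). The function names, the bin layout, and the use of sqrt(1 - BC) as a distance are illustrative assumptions, not the thesis's implementation. The Bhattacharyya coefficient BC = Σ sqrt(p_i q_i) is 1 for identical distributions and 0 for non-overlapping ones, so the candidate with the largest BC is the best match to the model.

```python
import math
from typing import List, Sequence


def normalize(hist: Sequence[float]) -> List[float]:
    """Scale a non-negative histogram so its bins sum to 1 (a discrete PDF)."""
    total = sum(hist)
    if total <= 0:
        raise ValueError("histogram must have positive mass")
    return [h / total for h in hist]


def bhattacharyya_coefficient(p: Sequence[float], q: Sequence[float]) -> float:
    """BC = sum_i sqrt(p_i * q_i); 1.0 for identical PDFs, 0.0 for disjoint ones."""
    if len(p) != len(q):
        raise ValueError("histograms must use the same bins")
    p, q = normalize(p), normalize(q)
    return sum(math.sqrt(a * b) for a, b in zip(p, q))


def bhattacharyya_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """Distance sqrt(1 - BC); clamp guards against tiny negative rounding error."""
    return math.sqrt(max(0.0, 1.0 - bhattacharyya_coefficient(p, q)))


def best_candidate(model: Sequence[float], candidates: Sequence[Sequence[float]]) -> int:
    """Index of the candidate histogram most similar to the model (highest BC)."""
    return max(range(len(candidates)),
               key=lambda i: bhattacharyya_coefficient(model, candidates[i]))


# Hypothetical 3-bin face model and two candidate regions (raw bin counts).
face_model = [0.1, 0.6, 0.3]
regions = [[5, 1, 4], [1, 6, 3]]
print(best_candidate(face_model, regions))  # 1
```

Normalizing inside the coefficient lets raw pixel counts from differently sized candidate regions be compared directly against the model.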

FACE DETECTION

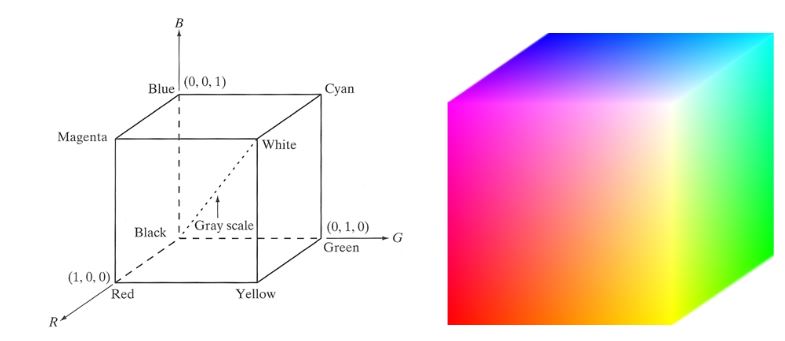

Figure 2.1: The RGB Color Cube

Chiefly used for its simplicity and compatibility with display devices, RGB color space does not separate chrominance (color) and illumination (brightness) information. Hence, a variation in an object's luminance alters each of the object's color components. Throughout this report, each color component within the RGB space is confined to the range [0,1]. Figure 2.1 illustrates the RGB color cube, with the dotted line representing grayscale values extending linearly from the origin to the point [1,1,1].
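The coupling between brightness and color in RGB can be illustrated with a short sketch. It models an illumination change as a uniform scale factor k applied to every component, clipped to [0,1]; this simple scaling model and the helper names are assumptions for illustration. A colored pixel changes in all three components, while a gray pixel simply slides along the cube diagonal from [0,0,0] toward [1,1,1].

```python
from typing import Tuple

RGB = Tuple[float, float, float]


def scale_illumination(rgb: RGB, k: float) -> RGB:
    """Scale every RGB component by k (k < 1 darkens, k > 1 brightens), clipped to [0, 1]."""
    return tuple(min(1.0, max(0.0, k * c)) for c in rgb)


def on_gray_axis(rgb: RGB, tol: float = 1e-9) -> bool:
    """True if R, G and B are (nearly) equal, i.e. the color lies on the cube diagonal."""
    r, g, b = rgb
    return abs(r - g) <= tol and abs(g - b) <= tol


skin = (0.8, 0.5, 0.4)                 # hypothetical skin-like color
shadowed = scale_illumination(skin, 0.5)
print(shadowed)                        # (0.4, 0.25, 0.2): every component changed

gray = scale_illumination((0.5, 0.5, 0.5), 1.5)
print(on_gray_axis(gray))              # True: gray stays on the diagonal
```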

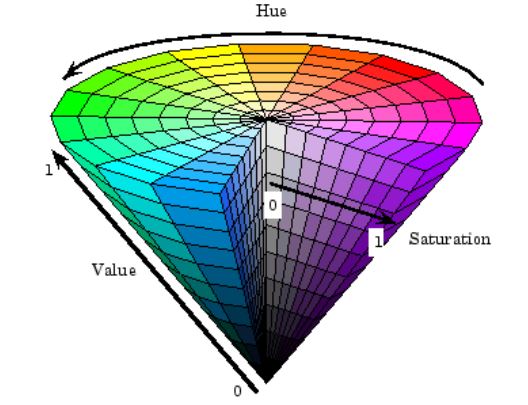

Figure 2.2: HSV Color Model

Figure 2.2 illustrates the conical HSV color model as described. The hue component lies on what is called a “color wheel” and is an angular measure ranging from 0° to 360° (or 0 to 2π radians), with 0° representing a device-dependent red wavelength. Because hue is an angular measure, it wraps around, so that 360° coincides with 0°. Saturation and intensity are magnitude measures ranging from zero to one, as discussed.
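The practical consequence of the hue wrap-around is that a naive difference between two reddish hues can be misleadingly large. The sketch below uses Python's standard `colorsys.rgb_to_hsv`, which returns hue in [0, 1) and calls the third component "value" rather than intensity. It converts hue to degrees and measures hue differences on the circle. The helper names and sample colors are illustrative assumptions.

```python
import colorsys
from typing import Tuple


def rgb_to_hsv_degrees(rgb: Tuple[float, float, float]) -> Tuple[float, float, float]:
    """Convert an RGB triple in [0, 1] to (hue in degrees, saturation, value)."""
    h, s, v = colorsys.rgb_to_hsv(*rgb)
    return h * 360.0, s, v


def hue_distance(h1: float, h2: float) -> float:
    """Angular distance between two hues in degrees, accounting for wrap-around at 360°."""
    d = abs(h1 - h2) % 360.0
    return min(d, 360.0 - d)


h_a, _, _ = rgb_to_hsv_degrees((1.0, 0.0, 0.1))  # slightly bluish red, hue near 354°
h_b, _, _ = rgb_to_hsv_degrees((1.0, 0.1, 0.0))  # slightly yellowish red, hue near 6°
print(abs(h_a - h_b))           # naive difference, roughly 348
print(hue_distance(h_a, h_b))   # wrap-aware difference, roughly 12
```

Treating hue as a point on a circle rather than a number on a line keeps red skin tones on either side of 0° from being classified as very different colors.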

FEATURE EXTRACTION

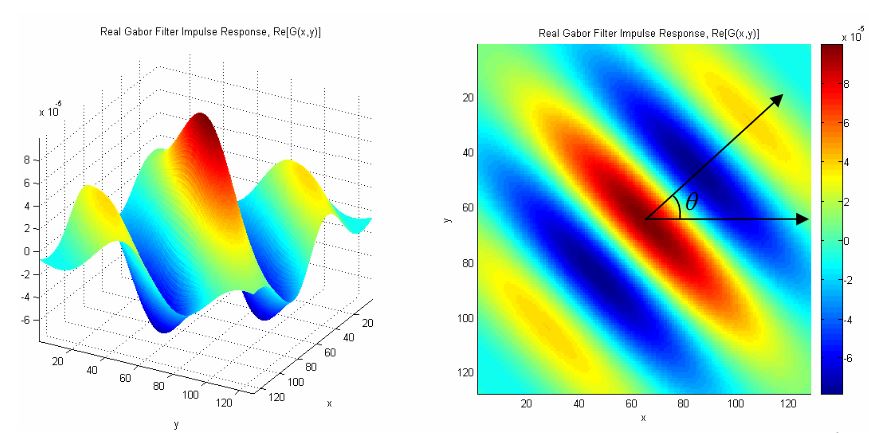

Figure 3.1: Sample Gabor Filter Impulse Response (Real Component)

Refer to Appendix B for a copy of all code used for algorithm development. Figure 3.1 on page 63 shows an example Gabor filter with the stated parameters, visualized in three dimensions as a surface and in two dimensions as a plot. Note that this figure displays only the real component of the filter, which is complex-valued.
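For readers without access to Appendix B, the following is a minimal, dependency-free sketch of a complex 2-D Gabor kernel: a Gaussian envelope multiplied by a complex sinusoidal carrier along a rotated axis. It uses a common textbook parameterization (wavelength, orientation `theta`, envelope width `sigma`, aspect ratio `gamma`, phase `psi`). These parameter names and values are assumptions and are not the thesis's stated parameters. As in Figure 3.1, the real component is extracted separately.

```python
import cmath
import math
from typing import List


def gabor_kernel(size: int, wavelength: float, theta: float, sigma: float,
                 gamma: float = 1.0, psi: float = 0.0) -> List[List[complex]]:
    """Return a size x size complex Gabor kernel (rows indexed by y, columns by x)."""
    if size % 2 == 0:
        raise ValueError("size must be odd so the kernel has a center pixel")
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            # Rotate coordinates so the carrier oscillates along orientation theta.
            xr = x * math.cos(theta) + y * math.sin(theta)
            yr = -x * math.sin(theta) + y * math.cos(theta)
            # Gaussian envelope, elongated or compressed by the aspect ratio gamma.
            envelope = math.exp(-(xr ** 2 + (gamma * yr) ** 2) / (2.0 * sigma ** 2))
            # Complex sinusoidal carrier with the given wavelength and phase offset.
            carrier = cmath.exp(1j * (2.0 * math.pi * xr / wavelength + psi))
            row.append(envelope * carrier)
        kernel.append(row)
    return kernel


def real_part(kernel: List[List[complex]]) -> List[List[float]]:
    """Extract the real component, the part visualized in Figure 3.1."""
    return [[v.real for v in row] for row in kernel]


# Hypothetical parameters: 7x7 kernel, 4-pixel wavelength, horizontal orientation.
k = gabor_kernel(size=7, wavelength=4.0, theta=0.0, sigma=2.0)
re = real_part(k)
print(round(re[3][3], 3), round(re[3][5], 3))  # center peak vs. half-wavelength trough
```

In a filtering pipeline, the image would be convolved with both components and the magnitude of the complex response typically used as the feature.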

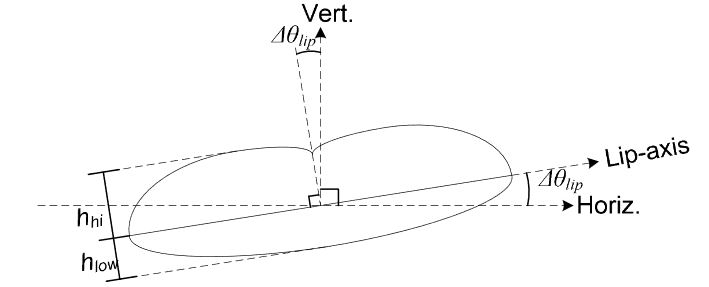

Figure 3.2: Lip Measurement Diagram

Refer to Figure 3.2 for a diagram of how these measurements were calculated. For clarity, any discussion of the lip's axial dimension refers to this diagram, with the lip axis defined as the axis stretching from lip corner to lip corner.
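Given the corner-to-corner definition of the lip axis, its length, orientation, and center follow directly from the two lip-corner coordinates. The sketch below assumes the corners are already available as pixel coordinates in image convention (x to the right, y downward, so a positive angle means the right corner sits lower in the frame). The `LipAxis` type and `lip_axis` helper are hypothetical and are not taken from the thesis code.

```python
import math
from typing import NamedTuple, Tuple

Point = Tuple[float, float]


class LipAxis(NamedTuple):
    length: float          # corner-to-corner distance in pixels
    angle_deg: float       # orientation relative to the image x-axis
    midpoint: Point        # center of the lip axis


def lip_axis(left_corner: Point, right_corner: Point) -> LipAxis:
    """Measure the axis stretching from one lip corner to the other."""
    (x1, y1), (x2, y2) = left_corner, right_corner
    dx, dy = x2 - x1, y2 - y1
    return LipAxis(
        length=math.hypot(dx, dy),
        angle_deg=math.degrees(math.atan2(dy, dx)),
        midpoint=((x1 + x2) / 2.0, (y1 + y2) / 2.0),
    )


print(lip_axis((100, 200), (160, 200)))
# LipAxis(length=60.0, angle_deg=0.0, midpoint=(130.0, 200.0))
```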

CONCLUSIONS AND FUTURE WORK

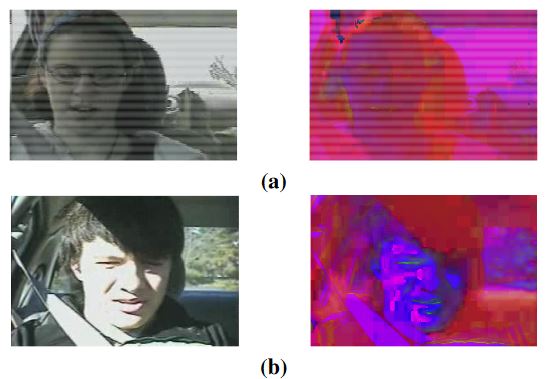

Figure 4.1: Original RGB and sHSV Images Displayed as RGB for (a) Underexposed (b) Overexposed Samples

Figure 4.1(a) and (b) contain sample images that represent underexposure and overexposure, respectively. Note the drastic deviations in skin chromatic values from (a) to (b), as well as the inconsistency of these values across the facial region in (b). Such inconsistencies across facial regions can cause incomplete and inaccurate skin and face classification, as well as degradation of the final lip localization (refer to Figure 3.10(b) on page 85).

Source: California Polytechnic State University

Author: Robert Edward Hursig